If only one input tensor is given, feat_src and feat_dst are the same tensor, as the following code shows:

    h_src = h_dst = self.feat_drop(feat)
    feat_src = feat_dst = self.fc(h_src).view(
        -1, self._num_heads, self._out_feats)
    # ...
    el = (feat_src * self.attn_l).sum(dim=-1).unsqueeze(-1)
    er = (feat_dst * self.attn_r).sum(dim=-1).unsqueeze(-1)
    graph.srcdata.update({'ft': feat_src, 'el': el})
    graph.dstdata.update({'er': er})
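To make the shapes in the snippet above concrete, here is a minimal standalone sketch in plain PyTorch. The sizes and the free-standing `fc`, `attn_l` and `attn_r` are assumptions made up for illustration (in the layer they are module attributes), and the dropout step is left out.

```python
import torch

# Hypothetical sizes, chosen only for illustration.
num_nodes, in_feats, num_heads, out_feats = 5, 16, 2, 8

# Stand-ins for self.fc, self.attn_l and self.attn_r.
fc = torch.nn.Linear(in_feats, num_heads * out_feats, bias=False)
attn_l = torch.nn.Parameter(torch.randn(1, num_heads, out_feats))
attn_r = torch.nn.Parameter(torch.randn(1, num_heads, out_feats))

feat = torch.randn(num_nodes, in_feats)

# Single-tensor case: both names refer to the same projected tensor.
feat_src = feat_dst = fc(feat).view(-1, num_heads, out_feats)

# One attention term per node and per head; attn_l and attn_r
# broadcast over the node dimension.
el = (feat_src * attn_l).sum(dim=-1).unsqueeze(-1)
er = (feat_dst * attn_r).sum(dim=-1).unsqueeze(-1)

print(feat_src.shape, el.shape, er.shape)
```

With these sizes, feat_src has shape [5, 2, 8] and both el and er have shape [5, 2, 1], so everything is indexed by the same node dimension.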

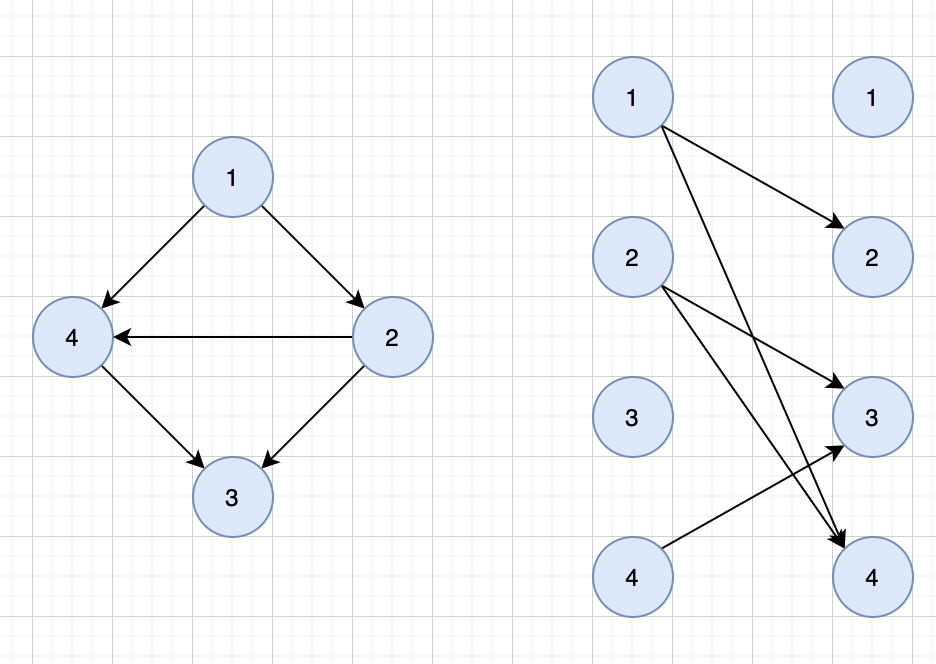

My question is: if graph.srcdata and graph.dstdata hold the data for all source and destination nodes respectively, they should have different shapes, such as [num_src_nodes, hidden_dim] and [num_dst_nodes, hidden_dim]. How can they have the same shape?

Now it makes sense, but this should be added to the docs, since this property is easy to misunderstand (at least it was for me).
