Hi, first of all, thank you very much for your reply! Let me describe my question a little more clearly.

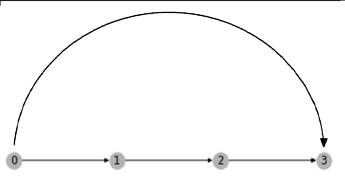

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

import dgl

src = np.arange(9)

dst = src + 1

src = np.append(src, [0,2,5])

dst = np.append(dst, [2,5,9])

g = dgl.graph((src,dst))

g.edges()

(tensor([0, 1, 2, 3, 4, 5, 6, 7, 8, 0, 2, 5]),

tensor([1, 2, 3, 4, 5, 6, 7, 8, 9, 2, 5, 9]))

tt = torch.arange(12).repeat(3,1)

g.edata['x'] = torch.permute(tt, (1,0))
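To make the edge features easier to read, here is a small torch-only sketch of the tensor built above (no DGL needed; the name `x` is just for illustration). Each edge ID `i` ends up with the feature row `[i, i, i]`, so the three extra "big" edges carry IDs 9, 10 and 11.

```python
import torch

tt = torch.arange(12).repeat(3, 1)   # shape [3, 12]: three copies of 0..11
x = torch.permute(tt, (1, 0))        # shape [12, 3]: one row per edge

print(x.shape)   # number of edges by feature size
print(x[0])      # features of edge 0 (0 -> 1)
print(x[9])      # features of the big edge 9 (0 -> 2)
```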

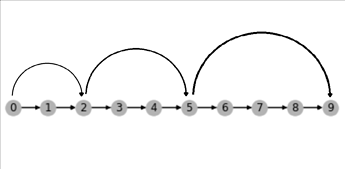

nodes = g.nodes()

g0 = dgl.node_subgraph(g, nodes[0:3])

g1 = dgl.node_subgraph(g, nodes[2:6])

g2 = dgl.node_subgraph(g, nodes[5:])

print(g0.edata['x'].shape)

print(g1.edata['x'].shape)

print(g2.edata['x'].shape)

torch.Size([3, 3])

torch.Size([4, 3])

torch.Size([5, 3])

Suppose that, for any edge that spans an arbitrary distance between src and dst in a directed graph, I want to:

- find the path between src and dst, concatenate the features of every edge on that path, and pass them through the same linear layer to get a tensor of shape torch.Size([1, 3]) that updates the big edge. Can I pad all of the paths to the same length?
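To show what I mean by "the path between src and dst", here is a rough sketch in plain Python. It is only an illustration under my own assumptions: the helper `path_edge_ids` is hypothetical (not a DGL function), it uses breadth-first search on the edge list printed earlier, and it skips the big edge itself so that the path goes through the small edges. If other shortcut edges lay between the two endpoints, a shortest-path search like this would take them.

```python
from collections import deque

# Edge list copied from the g.edges() output; edge ID = position in the list.
SRC = [0, 1, 2, 3, 4, 5, 6, 7, 8, 0, 2, 5]
DST = [1, 2, 3, 4, 5, 6, 7, 8, 9, 2, 5, 9]


def path_edge_ids(src_nodes, dst_nodes, start, goal, skip_edge=None):
    """Return the edge IDs on a shortest directed path from start to goal.

    skip_edge is the ID of an edge to ignore (here: the big edge itself).
    Returns None if no path exists.
    """
    # Adjacency list: node -> list of (neighbor, edge_id)
    adj = {}
    for eid, (u, v) in enumerate(zip(src_nodes, dst_nodes)):
        if eid != skip_edge:
            adj.setdefault(u, []).append((v, eid))

    # Breadth-first search, remembering the edge used to reach each node.
    parent = {start: None}
    queue = deque([start])
    while queue:
        u = queue.popleft()
        if u == goal:
            break
        for v, eid in adj.get(u, []):
            if v not in parent:
                parent[v] = (u, eid)
                queue.append(v)
    if goal not in parent:
        return None

    # Walk back from goal to start to collect the edge IDs in order.
    path = []
    node = goal
    while parent[node] is not None:
        node, eid = parent[node]
        path.append(eid)
    return path[::-1]


# The three big edges and their endpoints.
big_edges = {9: (0, 2), 10: (2, 5), 11: (5, 9)}
for eid, (u, v) in big_edges.items():
    print(eid, path_edge_ids(SRC, DST, u, v, skip_edge=eid))
```

The returned edge IDs could then be used to index `g.edata['x']` and gather the features along each path.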

def Pad(ii):

    ee = ii.edata['x']

    ee = F.pad(input=ee, pad=(0,0,0,7 - ee.shape[0]), mode='constant', value=0)

    return ee

print(Pad(g0).shape)

print(Pad(g1).shape)

print(Pad(g2).shape)

torch.Size([7, 3])

torch.Size([7, 3])

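torch.Size([7, 3])

Then, with self.Linear = nn.Linear(7, 1) applied along the padded dimension (that is, to the transposed [3, 7] tensor, since nn.Linear acts on the last dimension), can I update the big edges with the result?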
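To make the padding idea concrete, here is a rough, self-contained sketch in plain PyTorch (no DGL). The random tensors are stand-ins for the edge features of the three subgraphs, and `MAX_LEN`, `pad_edges` and `linear` are names I made up for illustration. Since `nn.Linear` acts on the last dimension, the padded edge axis has to be moved there before the layer is applied.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

MAX_LEN = 7  # assumed upper bound on the number of edges per path


def pad_edges(feats, max_len=MAX_LEN):
    """Zero-pad a [num_edges, feat_dim] tensor to [max_len, feat_dim]."""
    return F.pad(feats, (0, 0, 0, max_len - feats.shape[0]), mode="constant", value=0.0)


linear = nn.Linear(MAX_LEN, 1)  # one layer shared by all paths

# Stand-ins for the edge features of subgraphs with 3, 4 and 5 edges.
paths = [torch.randn(n, 3) for n in (3, 4, 5)]
batch = torch.stack([pad_edges(p) for p in paths])    # [3, 7, 3]

# Move the padded edge axis to the last dimension, apply the layer, move it back.
out = linear(batch.transpose(1, 2)).transpose(1, 2)   # [3, 1, 3]
print(out.shape)
```

Each slice `out[i]` has shape `[1, 3]` and could be written back to the corresponding big edge. One thing I am unsure about: the zero padding makes the result depend on the position of each edge along the path, which may or may not be what I want.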

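Or something like this:

class CClass(nn.Module):

    def __init__(self):

        super().__init__()

        self.weight = nn.ModuleDict()

        # one linear layer per possible number of edges (0 to 9)

        for n in range(10):

            self.weight[str(n)] = nn.Linear(n, 1)

    def forward(self, G, edata):

        num_edges = G.num_edges()

        # edata is [num_edges, 3]; nn.Linear acts on the last dimension, so transpose first

        oo = self.weight[str(num_edges)](edata.float().T).T

        return oo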

Or does this kind of operation even make sense to graph engineers?

Thank you very much for taking the time to read and help with my question.