I am opening this topic to ask for some help: I am working on graph classification with huge graphs (on the order of a million nodes each).

I suspect that training on graphs of this size is not feasible memory-wise with around 1k observations, so I am looking for alternatives.

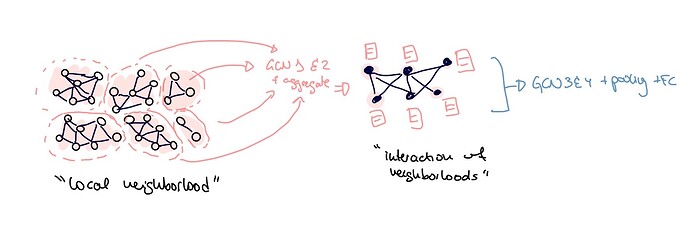

One of my first intuitions was to combine “very local features” with “higher-level features” of the graph. That is, train a GNN with graph convolutions at two different scales, where the node features fed into the convolution layers at the higher scale are the output of the “very local” convolutions (figure attached).
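To make the idea more concrete, here is a rough, weight-free sketch of the data flow I have in mind. It is plain Python, not DGL code: the helper names (`propagate`, `coarsen`, `cluster_of`) are made up for illustration, mean aggregation stands in for a learned graph convolution, and the cluster assignment is hand-picked because I do not yet know how to define it.

```python
from collections import defaultdict


def mean_rows(rows):
    """Element-wise mean of a list of equal-length feature vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def propagate(edges, feats):
    """One round of mean aggregation over each node and its neighbours.

    Stand-in for a learned graph convolution; edges are treated as undirected.
    """
    nbrs = defaultdict(set)
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    return {
        n: mean_rows([feats[n]] + [feats[m] for m in sorted(nbrs[n])])
        for n in feats
    }


def coarsen(edges, feats, cluster_of):
    """Pool node features per cluster and build the cluster-level edge list."""
    members = defaultdict(list)
    for node, vec in feats.items():
        members[cluster_of[node]].append(vec)
    coarse_feats = {c: mean_rows(rows) for c, rows in members.items()}

    coarse_edges = set()
    for u, v in edges:
        cu, cv = cluster_of[u], cluster_of[v]
        if cu != cv:  # keep only edges that cross cluster boundaries
            coarse_edges.add((min(cu, cv), max(cu, cv)))
    return coarse_feats, sorted(coarse_edges)


# Toy example: a 6-node path graph with 1-d node features.
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]
feats = {i: [float(i + 1)] for i in range(6)}

# ASSUMPTION: hand-picked "very local" neighbourhoods (two clusters of 3 nodes).
cluster_of = {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}

local_feats = propagate(edges, feats)                  # scale 1: local features
coarse_feats, coarse_edges = coarsen(edges, local_feats, cluster_of)
high_feats = propagate(coarse_edges, coarse_feats)     # scale 2: coarse graph
graph_embedding = mean_rows(list(high_feats.values()))  # readout for the classifier
```

The point of the second scale is that the expensive layers only ever see the coarse graph (here 2 nodes instead of 6), so the full million-node graph would only need to pass through the cheap local step.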

How to define the “very local” neighbourhood is still an open question for me, but would this approach make sense from a GNN perspective? Have you seen it in any reference paper? And do you have any intuition about how to build such a thing in DGL?

Any other suggestions for working with such large graphs in the graph classification setting would be hugely appreciated!